Origins

The story starts at Princeton University, in the basement of the engineering school. The Princeton Engineering Anomalies Research (PEAR) lab, founded by Robert Jahn in 1979, spent decades testing whether human intention could influence the output of electronic random number generators. PEAR published results suggesting small but statistically significant effects. The lab was controversial from the start.

Roger Nelson worked at PEAR for years. In 1998, he took the core idea and scaled it up. Instead of one person sitting in front of one machine, what if you spread dozens of machines around the world and looked for patterns during events that captured the attention of millions? That became the Global Consciousness Project.

The hypothesis was straightforward: when large numbers of people focus on the same event, the output of random number generators worldwide might show correlated deviations from expected randomness. Not huge effects. Small ones. But across enough devices and enough time, potentially detectable.

How the Network Works

The GCP network consists of hardware random number generators distributed across dozens of locations on six continents. These are not the pseudorandom generators used in software. They are physical devices that generate random bits from quantum-level electronic noise, specifically from tunneling effects in semiconductor junctions.

Each device, called an "egg," contributes 200 bits per second, and those bits are combined into a single trial value once per second. Each trial is the sum of the 200 bits, so chance alone predicts a mean of 100 and a standard deviation of about 7.07. At its peak, around 60-70 eggs were active simultaneously. The data streams continuously to a central server, originally at Princeton, now maintained by the GCP team.
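To make the trial arithmetic concrete, here is a minimal Python sketch of one simulated egg. It treats each trial as the sum of 200 fair bits and uses a software generator as a stand-in for the hardware noise source, which is exactly what a real egg is not. The function names are illustrative and are not taken from any GCP software.

```python
import random
import statistics

BITS_PER_TRIAL = 200  # from the text: 200 bits per second, one trial per second


def simulate_trial(rng: random.Random) -> int:
    """Return the number of 1-bits among BITS_PER_TRIAL simulated fair bits."""
    return bin(rng.getrandbits(BITS_PER_TRIAL)).count("1")


def trial_z(trial: int) -> float:
    """Standardize a trial against the binomial expectation for fair bits."""
    mean = BITS_PER_TRIAL * 0.5            # 100 for 200 bits
    sd = (BITS_PER_TRIAL * 0.25) ** 0.5    # sqrt(50), about 7.07
    return (trial - mean) / sd


# Software stand-in for a hardware source; the seed is arbitrary.
rng = random.Random(42)
trials = [simulate_trial(rng) for _ in range(10_000)]
print("mean:", statistics.mean(trials))
print("variance:", statistics.pvariance(trials))
```

Over many trials the printed mean and variance should sit close to 100 and 50. The analysis is looking for departures from that baseline.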

The eggs operate independently. They have no communication with each other. Any correlation in their output would, in theory, have no conventional explanation since each device relies on its own quantum noise source.

The Statistical Method

The analysis follows a standard pattern. First, an event is defined: a specific time window associated with an occurrence that engaged mass human attention. Ideally, the event is registered before the data is examined. This matters because choosing events after seeing the data introduces selection bias.

For each event window, the team calculates a chi-square statistic across all active eggs. This measures whether the combined output deviates from what chance alone would predict. The expected distribution is well-defined since the generators produce data with known statistical properties.
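The text does not spell out the formula, so the following is a sketch of one common way to build such a statistic, not necessarily the project's exact procedure. It assumes each egg's trial has already been converted to a z-score, combines the eggs for each second into a single network z-score (Stouffer's method), and sums the squares over the event window. All function names are hypothetical.

```python
import math


def stouffer_z(egg_zs):
    """Combine per-egg z-scores for one second into one network z-score."""
    return sum(egg_zs) / math.sqrt(len(egg_zs))


def event_chi_square(z_by_second):
    """Sum of squared network z-scores over the window.

    Under the null hypothesis this follows a chi-square distribution
    with one degree of freedom per second in the window.
    Returns (chi_square, degrees_of_freedom).
    """
    network_zs = [stouffer_z(zs) for zs in z_by_second]
    return sum(z * z for z in network_zs), len(network_zs)


def chi_square_to_z(chi2, dof):
    """Normal approximation to the chi-square; reasonable for large dof."""
    return (chi2 - dof) / math.sqrt(2 * dof)


# Toy window: two seconds, four eggs each (made-up numbers).
window = [[1.0, 1.0, 1.0, 1.0], [0.0, 0.0, 0.0, 0.0]]
chi2, dof = event_chi_square(window)
print(chi2, dof, chi_square_to_z(chi2, dof))
```

In the toy window the first second has all four eggs leaning the same way, which is the kind of correlated deviation the statistic is sensitive to. Four eggs deviating in different directions would largely cancel.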

The cumulative deviation graph is the project's signature visualization. It plots the running total of deviations across all tested events. If there were no effect, this line would wander randomly around zero, like a random walk. The GCP reports that the line trends consistently upward, in the direction that would indicate a real effect.
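A running total of this kind is easy to sketch. The example below assumes each event has been reduced to a single z-score and compares a simulated null series with the same series shifted by a small constant. The shift of 0.3 is purely illustrative and is not a figure from the project.

```python
import random
from itertools import accumulate


def cumulative_deviation(event_zs):
    """Running total of per-event z-scores, in event order."""
    return list(accumulate(event_zs))


rng = random.Random(0)  # arbitrary seed, simulated data only
null_events = [rng.gauss(0.0, 1.0) for _ in range(500)]

# Same noise with a small constant shift added to every event.
ILLUSTRATIVE_SHIFT = 0.3
shifted_events = [z + ILLUSTRATIVE_SHIFT for z in null_events]

null_curve = cumulative_deviation(null_events)
shifted_curve = cumulative_deviation(shifted_events)
print("null endpoint:", null_curve[-1])
print("shifted endpoint:", shifted_curve[-1])
```

The null curve wanders with no preferred direction, while the shifted curve climbs steadily because the small per-event offset adds up linearly and the noise grows only with the square root of the number of events.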

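Over its full dataset of several hundred events, the project reports a combined p-value of approximately one in a trillion. That number sounds extraordinary. It means that if the null hypothesis were true (no effect), the chance of seeing results at least this extreme would be about 10^-12, which corresponds to roughly seven standard deviations. It is a statement about the data under the null hypothesis, not the probability that the effect is absent.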
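For readers who want to translate between p-values and standard deviations, here is a small sketch using only the Python standard library. It assumes a one-sided test and a normal distribution. The event count passed to `required_mean_event_z` is a placeholder, since the text only says "several hundred".

```python
import math
from statistics import NormalDist


def p_to_z(p_one_sided):
    """z-score whose upper-tail probability under a standard normal is p."""
    return -NormalDist().inv_cdf(p_one_sided)


def z_to_p(z):
    """Upper-tail probability of a standard normal beyond z."""
    return 0.5 * math.erfc(z / math.sqrt(2))


def required_mean_event_z(p_one_sided, n_events):
    """Average per-event z needed for n equally weighted events to reach p
    when combined with Stouffer's method (sum of z divided by sqrt(n))."""
    return p_to_z(p_one_sided) / math.sqrt(n_events)


z = p_to_z(1e-12)
print("z for p = 1e-12:", z)
# 500 is a hypothetical event count used only for illustration.
print("mean per-event z needed:", required_mean_event_z(1e-12, 500))
```

The second figure shows why a tiny combined p-value does not require dramatic individual events: a modest average deviation, repeated over hundreds of events, is enough.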

What the Data Shows

The September 11, 2001 analysis is the most cited result, and the most debated. The GCP team reported significant deviations in the hours surrounding the attacks. Some versions of the analysis show the deviation beginning before the first plane struck, which has drawn particular attention and particular skepticism.

Other events that produced notable results include major earthquakes, New Year celebrations (the midnight rollover tracked across time zones), presidential elections, and large-scale meditation events. Not every tested event shows a deviation. The claim is that the aggregate trend across hundreds of events is significant.

The project has tested events across a wide range of categories: disasters, celebrations, political milestones, spiritual gatherings. The overall trend in the cumulative deviation graph goes consistently in the predicted direction. Individual events vary. Some show strong effects, others show nothing, and a few go in the opposite direction.

Criticism and Debate

The GCP has drawn serious criticism from statisticians and physicists. The concerns are worth understanding because they apply to any research at the edges of established science.

Event selection. Critics argue that the criteria for defining events are too flexible. Even with pre-registration, the choice of which events to test, and how to define the time window, can influence the results. A major disaster might be defined as starting at impact, or at the first news reports, or "when the world became aware." Each choice produces a different statistical result.

Multiple comparisons. When you test hundreds of events across dozens of data streams, you expect some apparently significant results by chance. At a 5% significance threshold, about one test in twenty will look significant even when nothing is going on. The look-elsewhere effect is real. The GCP team uses cumulative analysis to address this, but critics argue the cumulative approach has its own problems.
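The size of the multiple-comparisons problem can be worked out directly. The sketch below assumes independent tests, and the count of 500 is a hypothetical round number, not the project's actual event total.

```python
def expected_false_positives(n_tests, alpha):
    """Expected number of tests that pass the threshold by chance alone."""
    return n_tests * alpha


def prob_at_least_one(n_tests, alpha):
    """Chance that at least one of n independent null tests looks significant."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_threshold(n_tests, alpha):
    """Per-test threshold that keeps the family-wise error rate at alpha."""
    return alpha / n_tests


N_TESTS = 500  # hypothetical count for illustration
ALPHA = 0.05

print("expected chance hits:", expected_false_positives(N_TESTS, ALPHA))
print("P(at least one):", prob_at_least_one(N_TESTS, ALPHA))
print("Bonferroni per-test threshold:", bonferroni_threshold(N_TESTS, ALPHA))
```

This is why isolated significant events carry little weight on their own, and why the argument on both sides centers on the aggregate statistic.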

No mechanism. Mainstream physics has no framework for how human consciousness could influence quantum processes in electronic devices located thousands of kilometers away. Without a plausible mechanism, the statistics have to carry the entire claim, and extraordinary claims require extraordinary evidence. Many physicists consider the results more likely to reflect subtle methodological issues than a genuine physical effect.

Replication. Independent replication is difficult because the GCP network is unique. Other researchers have attempted similar experiments with different setups and produced mixed results. The field lacks the kind of consistent, independent replication that would shift consensus.

The GCP team has responded to these critiques in published papers. They argue that their pre-registration protocol addresses selection bias, that the cumulative deviation is too consistent to be a statistical artifact, and that the absence of a mechanism does not invalidate an empirical result. The debate continues.

What EarthBeat Shows

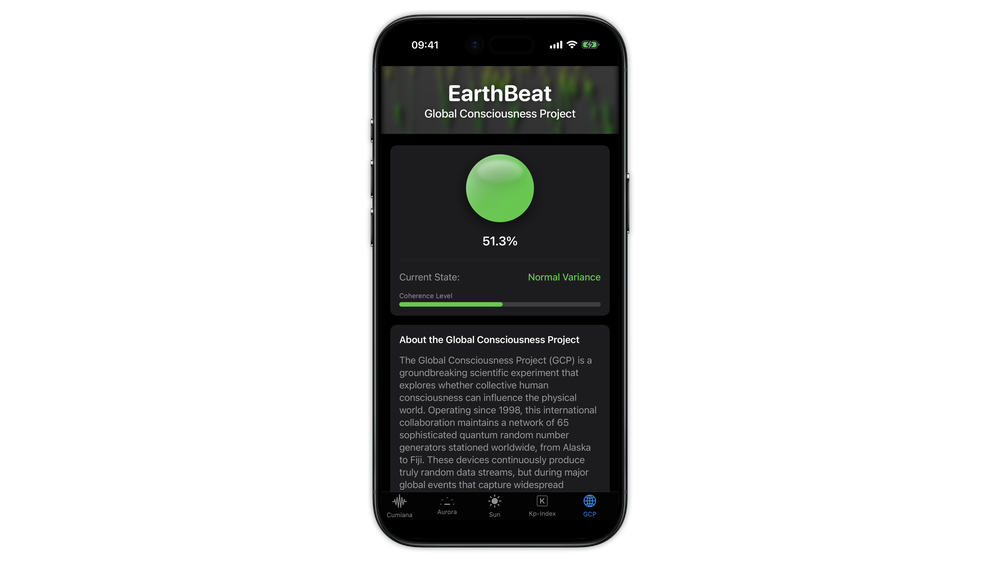

The GCP dot in EarthBeat displays the current network variance metric from the Global Consciousness Project. It reflects whether the worldwide network of random number generators is producing output that deviates from expected randomness at any given moment.

The dot changes color based on the deviation level. Green means the network output is close to what chance would predict. Yellow indicates moderate deviation. Red signals that the network is producing results that fall significantly outside expected bounds.
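A color mapping like this can be sketched as a simple threshold function. The cutoffs below are hypothetical placeholders chosen for illustration, and the assumption that the input is a z-score is also ours. EarthBeat's actual thresholds and input metric are not given in the text.

```python
def dot_color(z: float, moderate: float = 1.0, strong: float = 2.0) -> str:
    """Map a network deviation (as a z-score) to a dot color.

    The `moderate` and `strong` cutoffs are hypothetical defaults,
    not EarthBeat's real values.
    """
    deviation = abs(z)  # direction of the deviation is ignored here
    if deviation >= strong:
        return "red"
    if deviation >= moderate:
        return "yellow"
    return "green"


for sample in (0.2, -1.5, 2.5):
    print(sample, dot_color(sample))
```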

This is a real-time snapshot of the network's statistical state. It does not, by itself, prove or disprove anything about consciousness. It shows you what the data is doing right now and lets you draw your own conclusions.

Summary

The Global Consciousness Project raises a genuine scientific question about the relationship between collective human attention and physical random processes. The data has been collected, the statistics have been published, and the interpretation remains contested. EarthBeat displays the GCP network state in real time through its 3D dot indicator.